In the heated race to develop the world's most capable artificial intelligence, Apple has unveiled a powerful new language model called ReALM that the tech giant claims can outperform even OpenAI's vaunted GPT-4 on certain types of queries.

According to details published by Apple's AI research team, ReALM (short for Reference Resolution As Language Modeling) is designed to provide more accurate and context-aware responses by tapping into a user's device activity, visual surroundings, and conversational history. This extra "awareness" could allow ReALM to better understand the intent behind queries compared to traditional large language models trained only on text.
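The name hints at the general idea: reference resolution is framed as a text problem that a language model can answer. The sketch below is one illustrative way that framing could look. It is not Apple's implementation: the `Entity` class, the `build_prompt` function, the three entity kinds, and the prompt wording are all hypothetical, chosen only to show candidate entities from the screen, the conversation, and background activity being flattened into a numbered text prompt.

```python
from dataclasses import dataclass


@dataclass
class Entity:
    """A candidate the user might be referring to (hypothetical structure)."""
    kind: str  # assumed labels: "onscreen", "conversational", "background"
    text: str  # plain-text description of the entity


def build_prompt(query: str, entities: list[Entity]) -> str:
    """Serialize candidate entities into a numbered, text-only prompt."""
    lines = ["Candidate entities:"]
    for i, entity in enumerate(entities, start=1):
        lines.append(f"{i}. [{entity.kind}] {entity.text}")
    lines.append(f"User request: {query}")
    lines.append("Which entity numbers does the request refer to?")
    return "\n".join(lines)


# Illustrative data only; none of these values come from Apple's research.
entities = [
    Entity("onscreen", "phone number 555-0100 shown on a web page"),
    Entity("conversational", "pharmacy mentioned in the previous turn"),
    Entity("background", "alarm currently ringing"),
]
prompt = build_prompt("Call that number", entities)
print(prompt)
```

The resulting string could then be passed to any text-only language model, which is the appeal of the framing: an ambiguous phrase like "that number" becomes a multiple-choice question over entities the device already knows about.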

"ReALM provides more accurate answers because it has the ability to use ambiguous on-screen references and access both conversational and background information," the Apple researchers wrote. "It can look at the user's screen as part of its search process, as well as other running processes on the querying device, gaining insight into the train of thought that led to the query."

An AI That Accounts for Real-World Context

While AI systems like OpenAI's GPT-4 and Anthropic's Claude have dazzled with their strong language understanding and multitasking capabilities, they remain fundamentally constrained by their lack of real-world context and embodied perception. ReALM aims to bridge that gap.

By grounding its responses in signals like the contents of a user's camera viewfinder, their recent app activity, geolocation data, and more, ReALM could theoretically provide more relevant and coherent responses than models that work from the text of the query alone.

For example, if a user were to ask "What is the name of this product?" while looking at an electronic device through their iPhone's camera, ReALM could tap into that visual information and device data to identify the product, something beyond the capabilities of existing language models limited to text inputs alone.

Apple Claims Outperformance on Key Tasks

The researchers say they have tested ReALM against multiple leading language models, including the latest GPT-4, and that their system outscored the competition on certain specialized benchmarks emphasizing multimodal reasoning and real-world grounding.

"They claim ReALM scored better than all other LLMs on certain types of tasks by leveraging the extra context," said AI researcher Ahmed Raza. "Of course, the full benchmarks and details will need third-party verification. But the overall premise of fusing language AI with multimodal signals is compelling and could push conversational AI further."

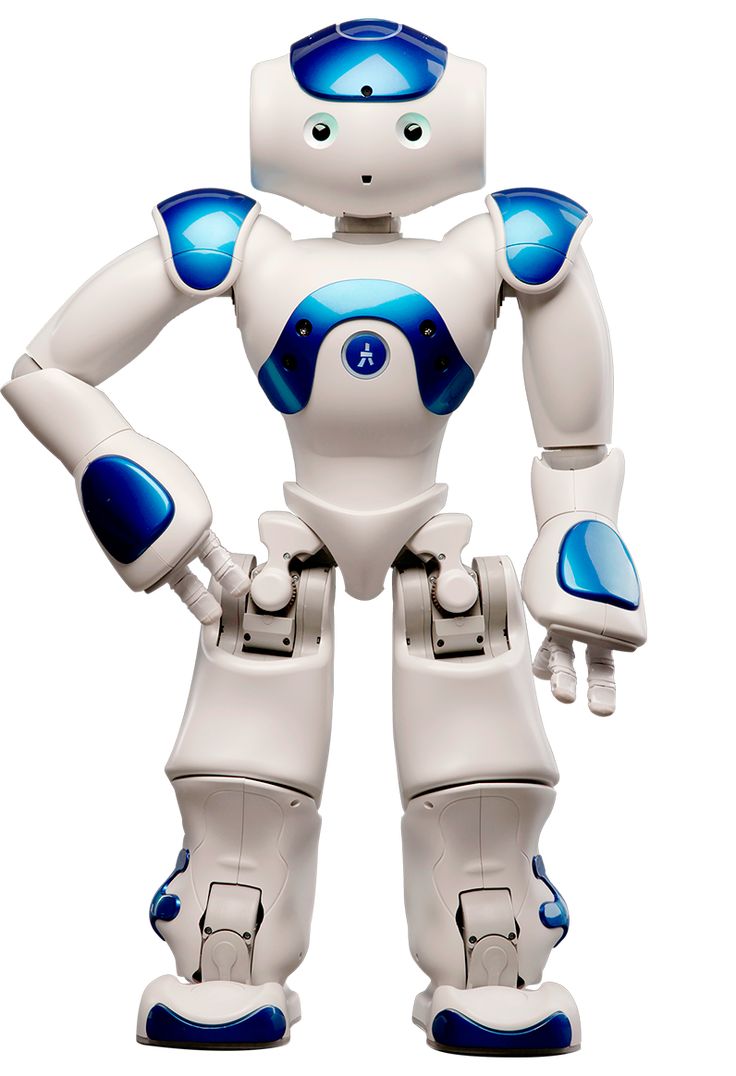

While ReALM's performance advantages may be narrow for now, the incorporation of grounded multi-sensor data could open opportunities for entirely new AI capabilities tuned for augmented reality, embodied robotics, and rich multitasking scenarios.

Powering an Enhanced Siri Experience

For consumers, Apple plans to integrate ReALM's context-aware smarts into its digital assistant Siri as part of this summer's anticipated iOS 18 release. The move could help Siri shed its long-running reputation as a simple voice control utility and reposition it as a true cognitive AI assistant on par with Amazon's Alexa and Google Assistant.

"It will likely only be available to users who upgrade to iOS 18 when released," the Apple team noted. With ReALM's contextual awareness layered over Siri's existing voice AI, the assistant may finally have the tools to provide more coherent, multimodal responses that can perceive and adapt to a user's real-world situation.

While the full extent of ReALM's abilities remains to be seen, Apple's approach represents a tantalizing step toward AI systems that can fluidly interact with and understand the rich, multimodal nature of human experiences. As AI's horizons expand beyond pure language tasks, contextual grounding could prove a key differentiator in the race to develop radically smarter and more useful artificial intelligence.