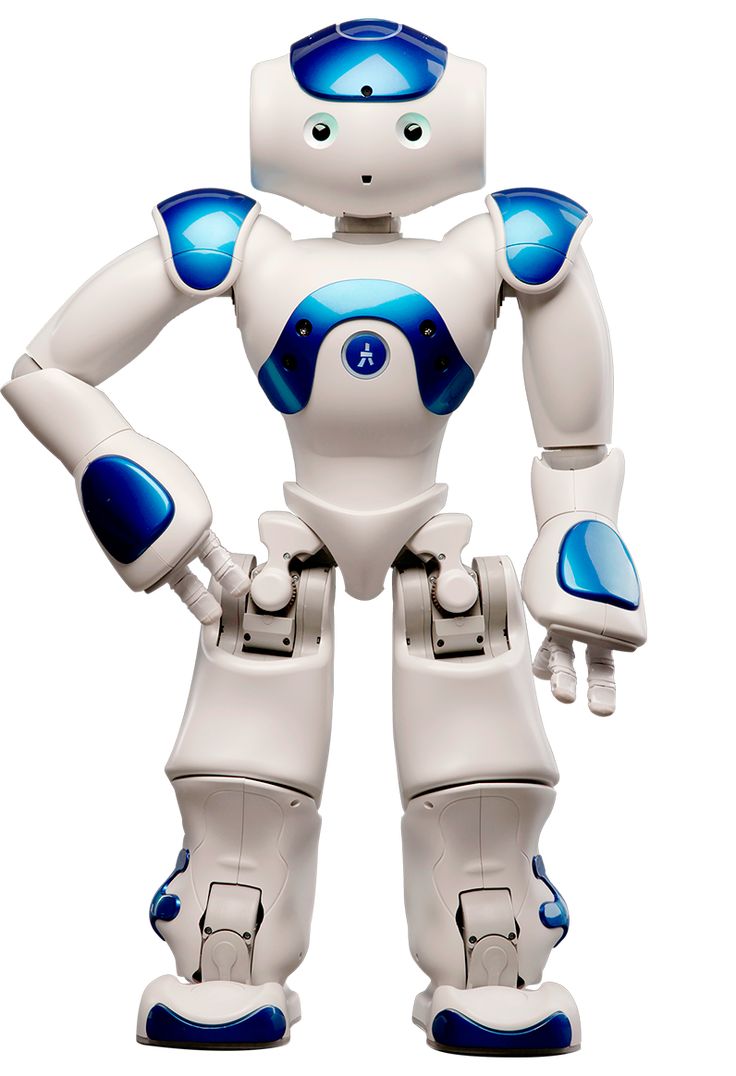

A team of Chinese engineers has built an ingenious multi-camera rig designed to help autonomous drones, self-driving cars, and mobile robots sense complex surroundings more reliably. Rather than relying on a single conventional camera, the sensor coordinates several optics to widen the field of view while preserving the detail and precision that navigating the real world demands.

Overcoming Limited Perspectives

Many current machine vision setups rely on a single conventional or depth-sensing camera. Their constrained fields of view can miss important objects at the periphery, and a single conventional camera captures no stereoscopic data, which is key to gauging true 3D relationships from imagery.

The new approach merges narrow, overlapping feeds into a unified 120-degree mosaic without blind spots. A central 4K RGB camera supplies a high-resolution view that overlaps with four lower-resolution HD cameras angled to cover the adjacent quadrants. Custom computer vision algorithms then stitch the feeds into a single detailed panorama of the environment.
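The article does not publish the rig's camera angles or lens specifications, so the sketch below uses hypothetical yaw angles and horizontal fields of view purely to illustrate one sub-problem of building a mosaic "without blind spots": checking that the cameras' angular spans jointly cover the target 120 degrees. It models horizontal coverage only, with angles in degrees measured from the forward direction, and it is not the researchers' stitching algorithm.

```python
from typing import List, Tuple

# Each camera is (yaw_centre_deg, horizontal_fov_deg). All values are hypothetical.
Camera = Tuple[float, float]
Interval = Tuple[float, float]


def angular_coverage(cameras: List[Camera]) -> List[Interval]:
    """Merge every camera's horizontal span into a sorted list of covered intervals."""
    spans = sorted((yaw - fov / 2, yaw + fov / 2) for yaw, fov in cameras)
    merged: List[Interval] = []
    for start, end in spans:
        if merged and start <= merged[-1][1]:
            # Overlaps (or touches) the previous interval: extend it.
            merged[-1] = (merged[-1][0], max(merged[-1][1], end))
        else:
            merged.append((start, end))
    return merged


def find_gaps(cameras: List[Camera], target_start: float, target_end: float) -> List[Interval]:
    """Return the parts of [target_start, target_end] that no camera sees."""
    uncovered: List[Interval] = []
    cursor = target_start
    for start, end in angular_coverage(cameras):
        if end <= cursor:
            continue  # interval lies entirely before the unexamined region
        if start > cursor:
            uncovered.append((cursor, min(start, target_end)))
        cursor = max(cursor, end)
        if cursor >= target_end:
            break
    if cursor < target_end:
        uncovered.append((cursor, target_end))
    return uncovered


# Hypothetical layout: one wide central camera plus four narrower side cameras.
RIG: List[Camera] = [(0.0, 50.0), (-45.0, 40.0), (-25.0, 40.0), (25.0, 40.0), (45.0, 40.0)]

if __name__ == "__main__":
    print(find_gaps(RIG, -60.0, 60.0))  # prints [] because the 120-degree target is fully covered
```

Dropping the central camera from this layout leaves a blind spot from -5 to 5 degrees. Real stitching also needs generous overlap between neighbouring views so that features can be matched and blended, so a gap-free angular union is a necessary condition rather than a sufficient one.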

Truly Binocular Capabilities Transform Perception

Unlike most multi-camera rigs, which simply combine feeds, this configuration achieves true binocular vision across a much wider area. In practice, that means depth can be calculated from the subtle discrepancies between overlapping views at every pixel, rather than only in a small central sweet spot. According to the researchers, experiments show substantially better measurement accuracy than other multi-camera methods, especially at wider angles.
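Per-pixel stereo depth is conventionally computed with the pinhole relation Z = f·B/d, where f is the focal length in pixels, B the baseline between two cameras in metres, and d the disparity in pixels between matched points. The sketch below applies that textbook formula to a tiny disparity map; the focal length, baseline and disparity values are hypothetical, and it is not presented as the researchers' published method. It also estimates how a one-pixel matching error grows with distance (ΔZ ≈ Z²·Δd / (f·B)), which is why stereo precision depends so heavily on the cameras' geometry.

```python
from typing import List, Optional


def depth_from_disparity(
    disparity: List[List[float]], focal_px: float, baseline_m: float
) -> List[List[Optional[float]]]:
    """Convert a disparity map (pixels) to a depth map (metres) using Z = f * B / d.

    Pixels with zero or negative disparity have no valid stereo match and map to None.
    """
    return [
        [focal_px * baseline_m / d if d > 0 else None for d in row]
        for row in disparity
    ]


def depth_uncertainty(depth_m: float, focal_px: float, baseline_m: float,
                      disparity_error_px: float = 1.0) -> float:
    """First-order depth error for a given disparity error: dZ ~= Z^2 * dd / (f * B)."""
    return depth_m ** 2 * disparity_error_px / (focal_px * baseline_m)


if __name__ == "__main__":
    FOCAL_PX = 800.0   # hypothetical focal length in pixels
    BASELINE_M = 0.5   # hypothetical spacing between two overlapping cameras
    disparity_map = [[40.0, 80.0], [200.0, 0.0]]
    print(depth_from_disparity(disparity_map, FOCAL_PX, BASELINE_M))
    # prints [[10.0, 5.0], [2.0, None]]
    print(depth_uncertainty(10.0, FOCAL_PX, BASELINE_M))
    # prints 0.25
```

Because the error term grows with the square of depth, a one-pixel mismatch costs 0.25 m at 10 m in this hypothetical setup but far less at close range; a longer baseline or longer focal length shrinks it.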

The researchers believe that by more closely mimicking how our two eyes gather light, machine perception could finally approach human-level spatial understanding. Miniaturised versions of the sensor might one day become standard environmental mapping tools for drones, household robots, and autonomous cars navigating daily life.

Practical machine learning likewise depends on realistic, plentiful sensory data. Richer training datasets captured by next-generation multi-perspective sensors like this one should therefore translate into smarter algorithms for applications ranging from robotic manipulation to augmented reality. Just as binocular vision opened new evolutionary possibilities for mammals, this innovation promises to greatly advance machine intelligence.