In the race to develop advanced humanoid robots, one company has just reached a major milestone, with pivotal help from OpenAI's artificial intelligence models.

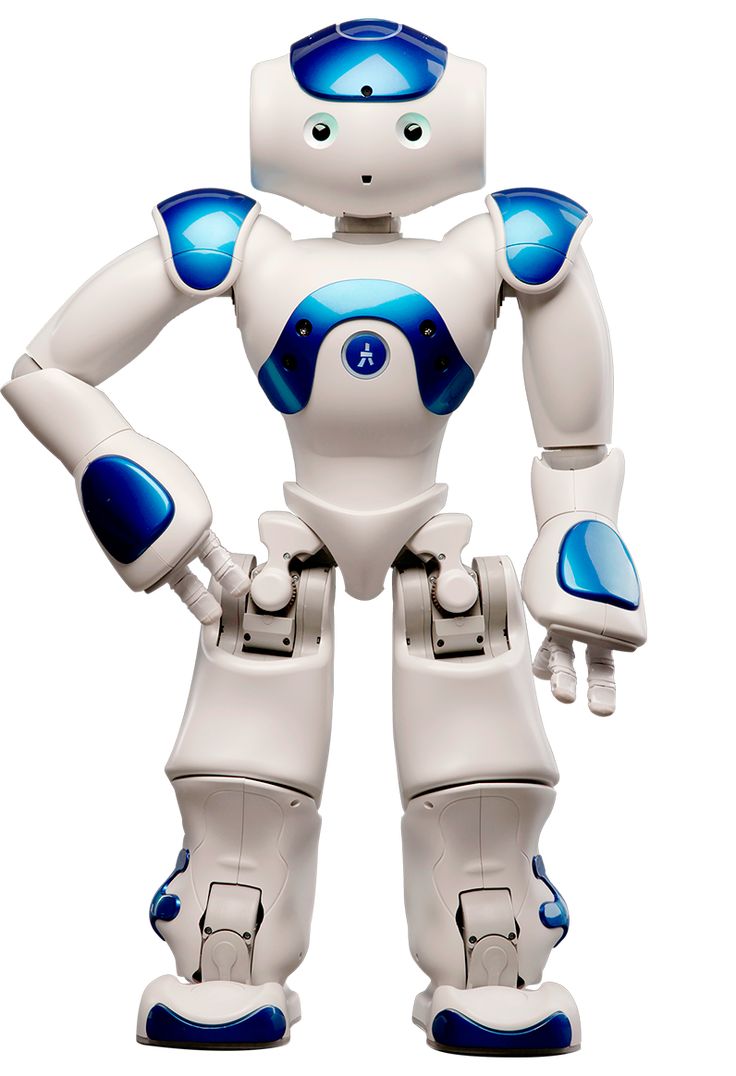

Figure, a robotics startup that has raised $675 million from investors including Jeff Bezos, Microsoft, Nvidia, and OpenAI, recently unveiled new capabilities for its bipedal robot, Figure 01. Thanks to a collaboration with OpenAI, the robot can now understand natural language commands, perceive its environment, and complete simple household tasks autonomously.

In a video demonstrating the robot's skills, Figure 01 hands over an apple when asked for something to eat. It can visually identify objects and describe them in detail. Perhaps most impressively, when asked where the dishes in front of it should go, the humanoid robot reasons that they belong in the drying rack and then puts them there.

"This is a major step towards our goal of creating robots that can truly help around the home," said Figure CEO and co-founder Brett Adcock. "Figure 01 is no longer just responding to commands, but understanding its surroundings and making decisions autonomously, like a human assistant would."

The underlying technology powering these newfound capabilities is OpenAI's vision and language models, which receive images from the robot's cameras. With them, Figure 01 can analyze visual data, comprehend questions and commands related to that data, and then determine the appropriate course of action.

While Adcock did not specify whether the company used OpenAI's latest GPT-4 model or a custom version, he noted that additional neural networks govern the robot's overall perception, decision-making, and physical actions based on that processed visual and verbal data.
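To make that division of labor concrete, here is a minimal sketch in Python of a perceive, decide, and act loop. It is purely illustrative and is not Figure's or OpenAI's actual code: the `Observation` type, the `choose_skill` and `execute` functions, and the skill names are all hypothetical, and simple keyword rules stand in for the vision-language model and the control networks.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

# Hypothetical label sets; a real system would get labels from a vision model.
EDIBLE = {"apple", "banana"}
DISHES = {"plate", "cup"}


@dataclass
class Observation:
    objects: List[str]  # object labels a vision model might report
    utterance: str      # transcribed speech from the person


def choose_skill(obs: Observation) -> Tuple[str, Optional[str]]:
    """Stand-in for the vision-language step: map scene + request to a skill."""
    text = obs.utterance.lower()
    if "eat" in text:
        # Pick the first visible object that is known to be edible.
        for obj in obs.objects:
            if obj in EDIBLE:
                return ("hand_over", obj)
    if "dishes" in text and any(o in DISHES for o in obs.objects):
        return ("put_away_dishes", None)
    return ("idle", None)


def execute(skill: str, target: Optional[str]) -> str:
    """Stand-in for the low-level control networks; here it only reports."""
    if target is None:
        return f"running {skill}"
    return f"running {skill} on {target}"


if __name__ == "__main__":
    obs = Observation(["cup", "apple", "plate"], "Can I have something to eat?")
    print(execute(*choose_skill(obs)))
```

The point of the sketch is the separation: one component turns what the robot sees and hears into a high-level decision, and a separate component is responsible for carrying that decision out physically.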

This combination of vision, language understanding, and autonomous execution represents a notable advance in general-purpose robot intelligence and an early step towards humanoid home assistants that can interact naturally with people.

"We've proven our robot can navigate the real world and handle complex scenarios, thanks to OpenAI's state-of-the-art AI models," said Adcock. "But this is just a prototype - there's still a lot of work to do on speed, efficiency, safety and other factors before a full consumer product launch."

Despite the impressive demo, Figure 01 still moves quite slowly compared to humans and would require far more development for reliable, large-scale deployment. But by pairing vision and language models with the robot's own control networks, Figure and OpenAI have cleared a major hurdle on the path to user-friendly humanoid assistants in the home.

The demo shows a robot that can see, understand, decide, and then act with a high degree of autonomy, bringing us one step closer to the long-promised robotic helper. With AI opening the door, the era of humanoid home robots may actually be within reach.