If you've ever played soccer with a robot, it's a familiar feeling. The sun shines on your face, and the smell of grass fills the air. You look around. A four-legged robot is hustling toward you, dribbling with determination.

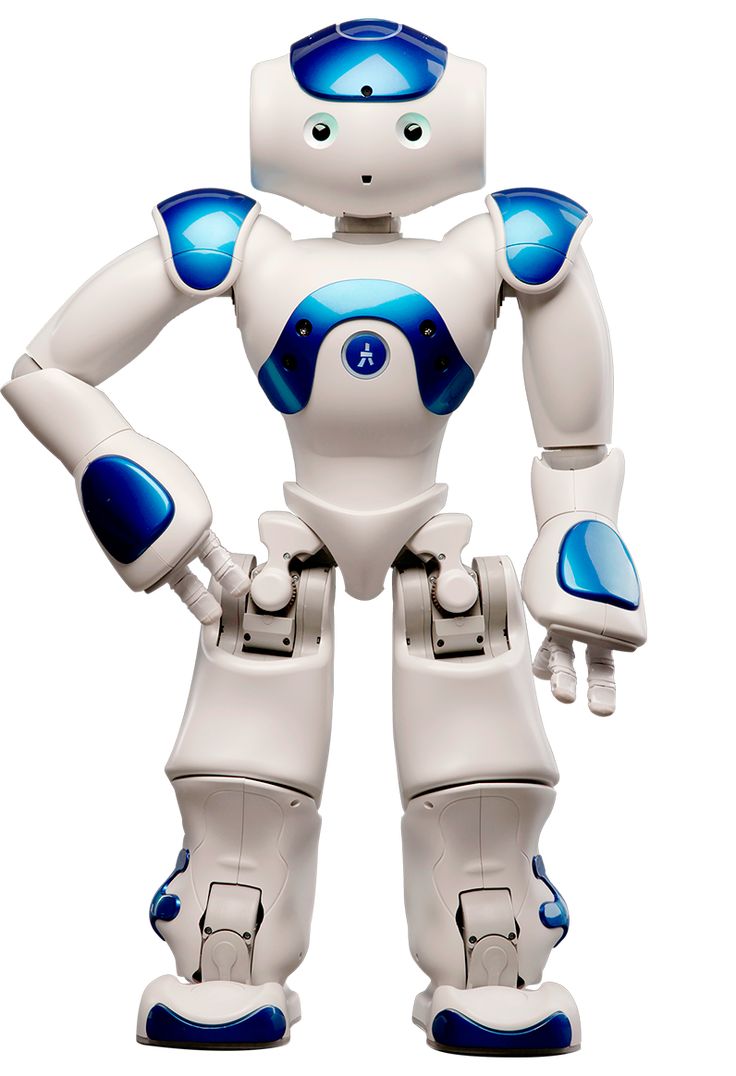

The bot may not show Lionel Messi-level ability, but it is an impressive in-the-wild dribbling system nonetheless. Researchers from the Massachusetts Institute of Technology's Improbable Artificial Intelligence Lab, part of the Computer Science and Artificial Intelligence Laboratory (CSAIL), have developed a legged robotic system that can dribble a soccer ball under the same conditions as humans. The bot used a mixture of onboard sensing and computing to traverse different natural terrains such as sand, gravel, mud, and snow, and adapted to their varied impact on the ball's motion. Like any committed athlete, "DribbleBot" could get up and recover the ball after falling.

Programming robots to play soccer has been an active research area for some time. However, the team wanted the robot to learn automatically how to actuate its legs during dribbling, making it possible to discover hard-to-script skills for responding to diverse terrains such as snow, gravel, sand, grass, and pavement. Enter simulation.

The robot, the ball, and the terrain live inside a simulation, a digital twin of the natural world. You can load in the bot and other assets and set physics parameters, and the simulator then handles the forward simulation of the dynamics. Four thousand versions of the robot are simulated in parallel in real time, enabling data collection 4,000 times faster than with a single robot. That's a lot of data.
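To make the parallel data collection concrete, here is a minimal sketch in Python. It is not the team's simulator: `ToyBatchSim`, its one-dimensional "ball speed" world, the per-copy friction values, and the `collect` helper are all hypothetical stand-ins chosen only to show how stepping many copies in lockstep multiplies the data gathered per step.

```python
import random


class ToyBatchSim:
    """Steps many independent copies of a 1D 'ball speed' toy world in lockstep."""

    def __init__(self, num_robots, seed=0):
        rng = random.Random(seed)
        # Assumption: each copy gets its own friction value as its physics parameter.
        self.friction = [rng.uniform(0.2, 1.0) for _ in range(num_robots)]
        self.ball_speed = [0.0] * num_robots

    def step(self, kicks, dt=0.02):
        """Apply one kick acceleration per copy and return one transition per copy."""
        transitions = []
        for i, kick in enumerate(kicks):
            before = self.ball_speed[i]
            # Toy dynamics: the kick speeds the ball up, friction slows it down.
            after = max(0.0, before + (kick - self.friction[i] * before) * dt)
            self.ball_speed[i] = after
            transitions.append((before, kick, after))
        return transitions


def collect(num_robots, num_steps):
    """Gather (speed_before, kick, speed_after) samples from all copies."""
    sim = ToyBatchSim(num_robots)
    data = []
    for _ in range(num_steps):
        data.extend(sim.step([1.0] * num_robots))
    return data
```

Calling `collect(4000, 10)` gathers 4,000 times as many samples as `collect(1, 10)` over the same number of simulation steps, which is the effect the paragraph above describes.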

The robot starts without knowing how to dribble the ball. It simply receives a reward when it does, or negative reinforcement when it makes a mistake. In effect, it is trying to figure out what sequence of forces it should apply with its legs. "One aspect of this reinforcement learning approach is that we must design a good reward to make it easier for the robot to learn successful dribbling behavior," says MIT graduate student Gabe Margolis, who led the work together with Yandong Ji, a researcher at the Improbable AI Lab. "Once we've designed that reward, it's practice time for the robot: in real time it's a couple of days, and in the simulator, hundreds of days. Over time it learns to get better and better at manipulating the soccer ball to match the desired velocity."
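As an illustration of what "designing a reward" can mean, the sketch below scores how closely the ball's velocity matches a commanded velocity and subtracts a penalty for falling. It is only a toy under stated assumptions: the function name, the exponential shaping, and the `sharpness` and `fall_penalty` values are hypothetical and are not taken from the DribbleBot work.

```python
import math


def dribbling_reward(ball_velocity, target_velocity, fell_over,
                     sharpness=4.0, fall_penalty=1.0):
    """Toy reward: near 1 when the ball moves at the target velocity, lower otherwise.

    ball_velocity and target_velocity are (vx, vy) tuples in m/s.
    sharpness and fall_penalty are illustrative, hypothetical constants.
    """
    # Squared error between the actual and the desired ball velocity.
    error_sq = sum((b - t) ** 2 for b, t in zip(ball_velocity, target_velocity))
    # Positive reinforcement: decays smoothly as the tracking error grows.
    tracking = math.exp(-sharpness * error_sq)
    # Negative reinforcement: a fixed penalty when the robot falls.
    return tracking - (fall_penalty if fell_over else 0.0)
```

A learning algorithm would call a function like this at every simulation step and adjust the controller to increase the total reward collected over time.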

The bot could also navigate unfamiliar terrains and recover from falls thanks to a recovery controller the team built into its system. This controller lets the robot get back up after a fall and switch back to its dribbling controller to continue pursuing the ball, helping it handle out-of-distribution disruptions and terrains.
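The hand-off between the two controllers can be pictured as a small state machine. The sketch below is an assumption-laden illustration, not the team's implementation: the `Mode` names, the use of body tilt as the fall signal, and both thresholds are hypothetical.

```python
from enum import Enum


class Mode(Enum):
    DRIBBLE = "dribble"
    RECOVER = "recover"


def next_mode(mode, tilt_rad, fall_tilt=1.0, upright_tilt=0.3):
    """Pick the active controller from the body's tilt angle in radians.

    Hypothetical thresholds: above fall_tilt the robot counts as fallen;
    below upright_tilt it counts as standing again.
    """
    if mode is Mode.DRIBBLE and abs(tilt_rad) > fall_tilt:
        return Mode.RECOVER  # fell over: hand control to the recovery controller
    if mode is Mode.RECOVER and abs(tilt_rad) < upright_tilt:
        return Mode.DRIBBLE  # back on its feet: resume chasing the ball
    return mode  # otherwise keep the current controller


def run_modes(tilts, start=Mode.DRIBBLE):
    """Return the controller selected after each tilt reading."""
    modes, mode = [], start
    for tilt in tilts:
        mode = next_mode(mode, tilt)
        modes.append(mode)
    return modes
```

Using two different thresholds keeps the robot in recovery until it is clearly upright, so it does not flip back and forth between controllers while it is still getting up.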

"If you look around today, most robots are wheeled. But imagine that there is a scenario of a natural disaster, flood or earthquake, and we want robots to help people in the search and rescue process. We need cars to navigate the terrain. They are not flat, and wheeled robots cannot move through these landscapes," says Pulkit Agrawal, professor at the Massachusetts Institute of Technology, chief researcher at CSAIL and director of Improbable AI Lab. modern robotic systems," he adds. "Our goal in developing algorithms for robots on their feet is to provide autonomy in complex and complex landscapes that are currently inaccessible to robotic systems."

The fascination with quadruped robots and soccer runs deep. Canadian professor Alan Mackworth first outlined the idea in a paper entitled "On Seeing Robots," presented at the VI-92 conference in 1992. Discussions followed about using soccer to promote science and technology. A year later, the project was launched under the name Robot J-League, and global excitement quickly followed. Shortly after that, "RoboCup" was born.

Compared to walking alone, dribbling a soccer ball imposes more constraints on DribbleBot's motion and on the terrains it can traverse. The robot must adapt its locomotion to apply forces to the ball in order to dribble. The interaction between the ball and the terrain can differ from the interaction between the robot and the terrain, for example on thick grass or pavement. A soccer ball will experience a drag force on grass that is not present on pavement, and an incline will apply an acceleration force, changing the ball's typical path. The bot's ability to traverse different terrains, however, is often less affected by these differences in dynamics, as long as it doesn't slip, so the soccer test can be sensitive to variations in terrain that locomotion alone is not.
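The effect of terrain on the ball can be sketched with a toy one-dimensional model: drag slows the ball in proportion to its speed, and an incline adds a constant acceleration. Everything here is an illustrative assumption, including the linear drag law, the drag coefficients, and the function name; none of it comes from the DribbleBot work.

```python
import math

G = 9.81  # gravitational acceleration in m/s^2


def roll_distance(initial_speed, drag_coeff, slope_rad=0.0, dt=0.01, steps=300):
    """Distance a ball travels along the surface over steps * dt seconds.

    Toy model: linear drag (drag_coeff, in 1/s) plus gravity along the slope.
    A positive slope_rad means the ball is rolling downhill.
    """
    speed, distance = initial_speed, 0.0
    for _ in range(steps):
        accel = G * math.sin(slope_rad) - drag_coeff * speed
        # Simplification: the ball stops rather than rolling backward.
        speed = max(0.0, speed + accel * dt)
        distance += speed * dt
    return distance


# Hypothetical coefficients: no drag on a paved surface, noticeable drag on grass.
paved = roll_distance(2.0, drag_coeff=0.0)
grass = roll_distance(2.0, drag_coeff=1.5)
downhill = roll_distance(2.0, drag_coeff=0.0, slope_rad=0.1)
```

With the same kick, the ball covers less ground on grass than on a paved surface and more ground when rolling downhill, so a controller has to adjust how hard and how often it touches the ball on each terrain.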

"Previous approaches simplified the dribbling problem by making the assumption of modeling a flat solid surface. The movement is also designed to be more static; the robot is not trying to run and manipulate the ball at the same time," says Ji. "That's where a more complex dynamic comes into the management problem. We have solved this problem by expanding the latest advances that have improved outdoor movement into this challenging task that combines aspects of movement and dexterous manipulation."

As for the hardware, the robot has a set of sensors that let it perceive the environment, allowing it to feel where it is, "understand" its position, and "see" some of its surroundings. It has a set of actuators that let it apply forces and move itself and objects. Between the sensors and the actuators sits a computer, or "brain," which is tasked with converting sensor data into actions that it carries out through the motors. When the robot runs on snow, it doesn't see the snow, but it can feel it through its motor sensors. Soccer is a trickier feat than walking, however, so the team used cameras on the robot's head and body to add vision as a new sensory modality alongside the new motor skill. And then comes the dribbling.

"Our robot can go into the wild because it has all the sensors, cameras and calculations on board. It took some innovation to get the whole controller to fit this onboard computing," Margolis says. "This is one of the areas where training helps, because we can launch a light neural network and teach it to process the noise sensor data observed by a moving robot. This is in sharp contrast to most modern robots: usually the robot arm is mounted on a fixed base and sits on a workbench with a giant computer connected directly to it. There is no computer or sensors in the manipulator! So it's all heavy, it's hard to move it."

We still have a long way to go to make these robots as agile as their counterparts in nature, and some terrains were challenging for DribbleBot. Currently, the controller is not trained in simulated environments that include slopes or stairs. The robot does not perceive the geometry of the terrain; it only estimates the terrain's material contact properties, such as friction. If there is a step up, for example, the robot will get stuck because it cannot lift the ball over the step, an area the team wants to explore in the future. The researchers are also excited to apply the lessons learned during the development of DribbleBot to other tasks that involve combined locomotion and object manipulation, such as quickly transporting diverse objects from place to place using the legs or arms.

"DribbleBot is an impressive demonstration of the feasibility of such a system in a complex problem space that requires dynamic control of the whole body," says Vikash Kumar, a researcher at Facebook AI Research who was not involved in the work. "What's impressive about DribbleBot is that all sensorimotor skills are synthesized in real time in an inexpensive system using embedded computing resources. Despite the fact that he demonstrates remarkable dexterity and coordination, this is just the beginning of a new era. The game has started!”

The research is supported by the DARPA Machine Common Sense Program, the MIT-IBM Watson AI Lab, the National Science Foundation Institute of Artificial Intelligence and Fundamental Interactions, the U.S. Air Force Research Laboratory, and the U.S. Air Force Artificial Intelligence Accelerator. A paper on the work will be presented at the 2023 IEEE International Conference on Robotics and Automation (ICRA).