In the golden age of artificial intelligence we now inhabit, it was only a matter of time before the remarkable capabilities of large language models like GPT-4 raised an existential question: can an AI system become so advanced that its textual "thoughts" are indistinguishable from those of humans?

A new study from researchers at UC San Diego suggests the answer may be "yes." By putting GPT-4, the same system powering the wildly popular ChatGPT, through a modern variation of the famous Turing test, they found that people could not reliably determine whether they were conversing with the AI or an actual person.

The experiment had human participants engage in brief online chats with an unseen partner: either another person or one of several AI systems, including GPT-4, its predecessor GPT-3.5, and the decades-old ELIZA program. After just five minutes of back-and-forth dialogue, participants had to judge whether their chat partner was a human or an AI.

While the vintage ELIZA software was easily identified as a rudimentary chatbot, and GPT-3.5 fared only slightly better at imitating human responses, the latest GPT-4 model left interrogators stumped. They identified it as an AI at roughly chance levels, about as well as a coin flip would. Even real people were correctly identified as human only around 67% of the time.
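For readers wondering what "chance level" means in practice, here is a minimal sketch of the kind of check involved: an exact two-sided binomial test of whether a share of "human" verdicts differs from a 50/50 coin flip. The function name `binomial_two_sided_p` and the counts below are assumptions made up for illustration. The 67-in-100 case simply mirrors the roughly 67% figure quoted above; none of these numbers are the study's actual sample sizes or data.

```python
from math import comb


def binomial_two_sided_p(successes: int, trials: int, p0: float = 0.5) -> float:
    """Exact two-sided binomial p-value under the null hypothesis p = p0.

    Sums the probability of every outcome that is no more likely
    than the observed one.
    """
    pmf = [
        comb(trials, k) * p0**k * (1 - p0) ** (trials - k)
        for k in range(trials + 1)
    ]
    observed = pmf[successes]
    # The small tolerance guards against floating-point ties.
    total = sum(p for p in pmf if p <= observed * (1 + 1e-9))
    return min(1.0, total)


# Hypothetical counts, NOT the study's data: "human" verdicts out of 100 chats.
examples = {
    "near coin flip (54 of 100)": (54, 100),
    "like the 67% human rate (67 of 100)": (67, 100),
}

for label, (judged_human, chats) in examples.items():
    p_value = binomial_two_sided_p(judged_human, chats)
    verdict = "differs from chance" if p_value < 0.05 else "consistent with chance"
    print(f"{label}: p = {p_value:.4f} ({verdict})")
```

With these made-up counts, a rate a few points away from 50% cannot be told apart from guessing, while a rate near 67% can. How sharp that distinction is depends heavily on the number of conversations, which is why the assumed sample size matters.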

"Our results suggest in the real world, people might not reliably tell if they're speaking to a human or an advanced AI like GPT-4," said researcher Cameron Jones. "This has concerning implications like AI being used to spread misinformation by masquerading as humans."

The researchers plan further studies probing whether ever more sophisticated AI language models can go beyond merely imitating human communication to actively deceiving people into believing fabricated "facts" or manipulating behavior through highly persuasive rhetoric.

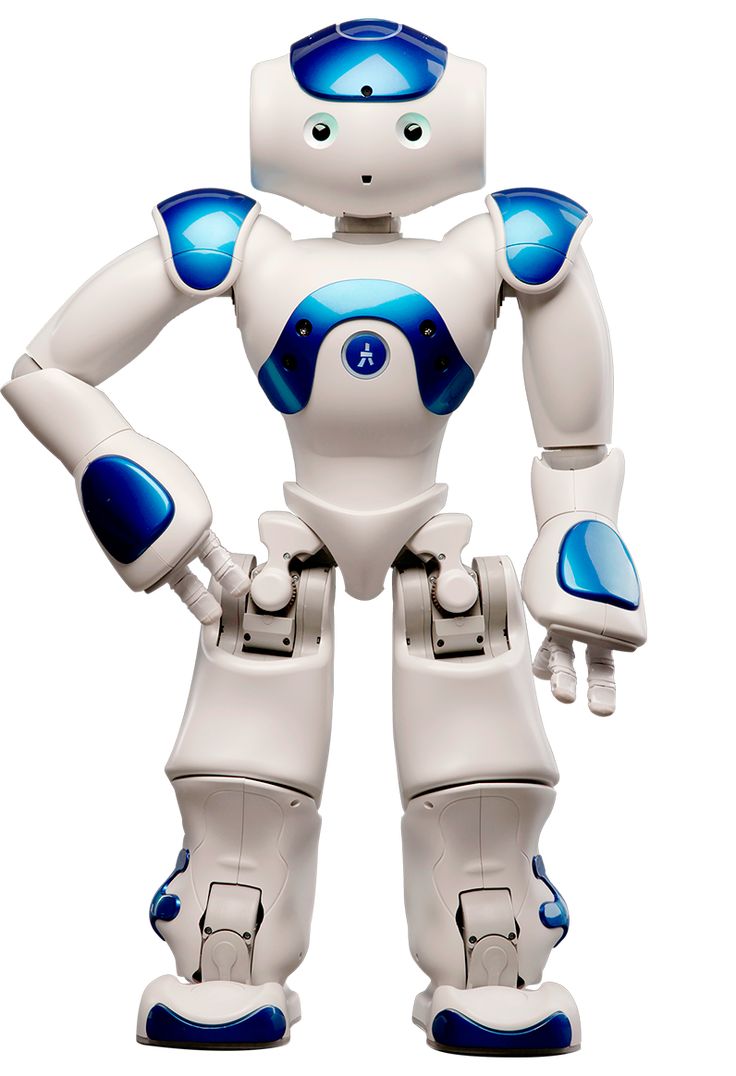

But even as academics grapple with the ethics of hyper-realistic AI, the new paradigm is already becoming a commercial reality with the launch of AI-powered virtual assistants like Call Annie. This app presents an interactive 3D avatar, styled as a photorealistic human girlfriend, that converses with users through an animated face driven by GPT-4's language engine.

While still an early experiment with some uncanny valley quirks, Call Annie foreshadows a future where AI assistants leave their clunky text-only predecessors behind and become expressive, human-like personalities we can talk to as naturally as on a video call.

Between the struggle to detect GPT-4 and the advent of AI avatars, one thing is clear: artisans of the virtual world are breathing ever more human-like life into their intelligent creations. As artificial intelligences grow more capable, they may eventually become as inscrutable as the brilliant human minds that spawned them.

In a poetic reversal, the machines we birthed to speak our language could someday converse as insightfully as their own makers. When that day comes, we may no longer be able to distinguish between the artisan's handiwork and the artisan.